Rows: 6,871

Columns: 7

$ school <fct> 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 2, 2, 2, 2, 2, 2, 2, 2, 2,…

$ student <dbl> 6701, 6702, 6703, 6704, 6705, 6706, 6707, 6708, 6709, 6710, 67…

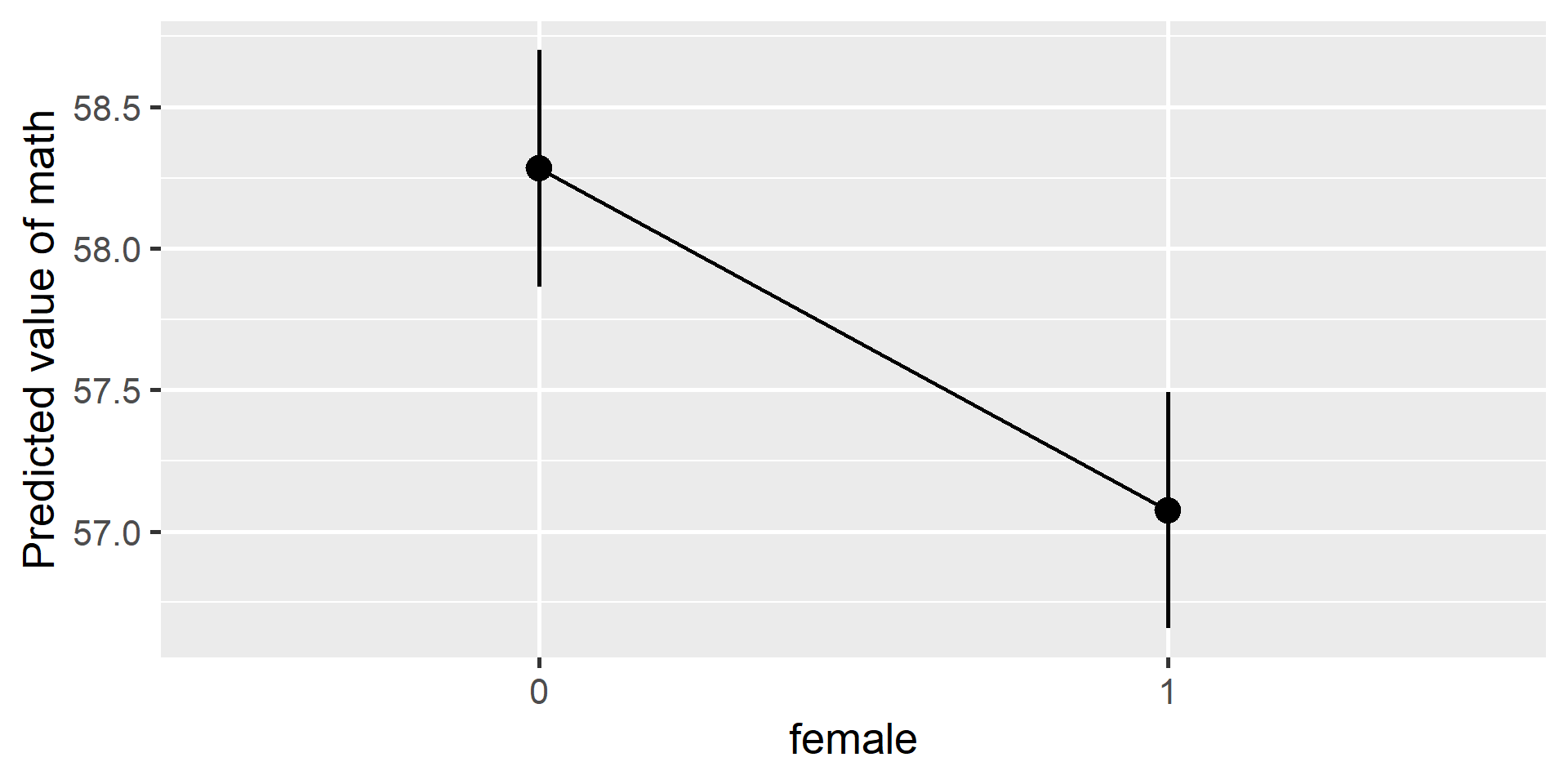

$ female <dbl> 1, 1, 1, 0, 0, 0, 0, 1, 0, 1, 0, 1, 0, 0, 0, 0, 0, 0, 0, 0, 0,…

$ ses <dbl> 0.586, 0.304, -0.544, -0.848, 0.001, -0.106, -0.330, -0.891, 0…

$ math <dbl> 47.1400, 63.6100, 57.7100, 53.9000, 58.0100, 59.8700, 62.5556,…

$ puniv <dbl> 0.08333333, 0.08333333, 0.08333333, 0.08333333, 0.08333333, 0.…

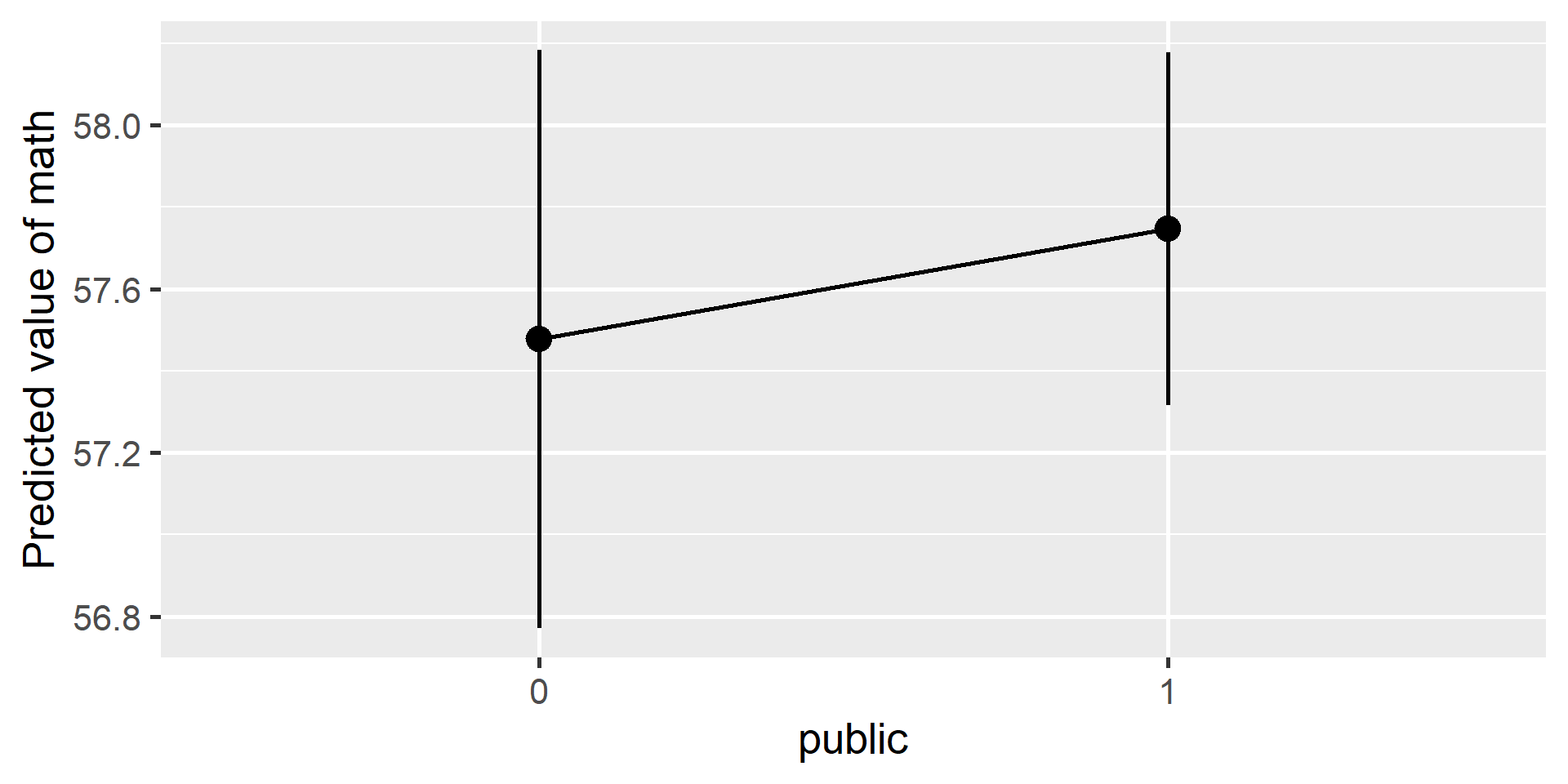

$ public <fct> 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0,…